Duke Researchers Join National Effort to Demystify Deep Learning

$10 million award will explore the mechanisms underlying deep learning algorithms to push AI applications to the next level

Three researchers from across Duke University are joining a nationwide collaboration to crack the coding contortions of deep learning algorithms, which fuel artificial intelligence and the related technologies that continue to propel society forward at an ever-increasing pace.

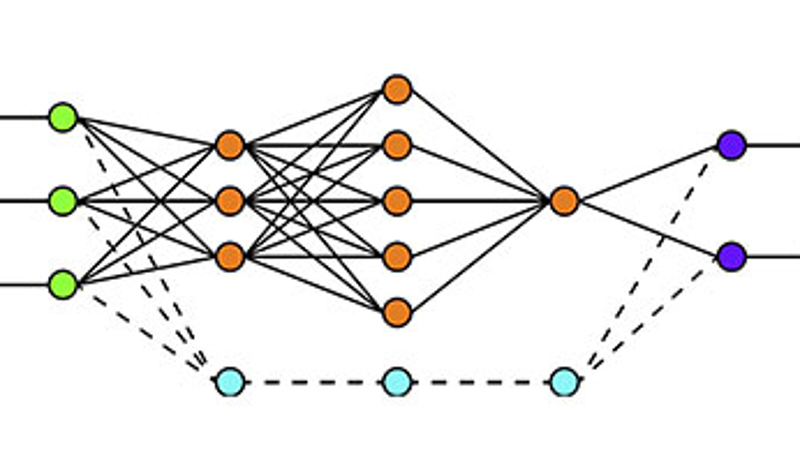

Automated systems designed to simplify our lives are everywhere, from customer service chatbots to self-driving cars. The complex and large-scale nature of deep networks, however, makes them hard to analyze, leading researchers to use them mostly as black boxes without formal guarantees on their performance. The design of deep networks remains an art and is largely driven by empirical performance on a data set. As deep learning systems are increasingly employed in our daily lives, it becomes critical to understand whether their predictions satisfy certain desired properties.

Led by René Vidal, the director of the Mathematical Institute for Data Science (MINDS) and the Herschel L. Seder Professor in Johns Hopkins University’s Department of Biomedical Engineering, a team of engineers, mathematicians and theoretical computer scientists from multiple institutions will seek to revolutionize our understanding of the mathematical and scientific foundations of deep learning.

Their project, Collaborative Research: Transferable, Hierarchical, Expressive, Optimal, Robust, and Interpretable NETworks (THEORINET), is funded by the National Science Foundation-Simons Foundation’s Research Collaborations on the Mathematical and Scientific Foundations of Deep Learning (MoDL) program and will receive $10 million over five years. The Johns Hopkins-led consortium is one of only two teams awarded funding in this national effort.

Joining THEORINET from Duke are Guillermo Sapiro, the James B. Duke Distinguished Professor of Electrical and Computer Engineering; Ingrid Daubechies, the James B. Duke Distinguished Professor of Mathematics and Electrical and Computer Engineering; and Rong Ge, assistant professor of computer science and mathematics. The award is set to support Duke’s work with $2 million over five years.

“The complex and large-scale nature of deep networks makes them hard to analyze and, therefore, they are mostly used as black-boxes without formal guarantees on their performance,” explained Vidal. “As deep learning systems are increasingly employed in our daily lives, it becomes critical to understand if their predictions satisfy certain desired properties.”

“NSF and our partners at the Simons Foundation recognize the importance of discovering the mechanisms by which deep learning algorithms work,” added Juan Meza, director of the division of mathematical sciences at NSF. “By understanding the limits of these networks, we can push them to the next level.”

THEORINET researchers will develop a mathematical, statistical and computational framework for explaining the success of current deep network architectures, understanding their pitfalls, and guiding the design of novel architectures with guaranteed robustness, interpretability, optimality, and transferability.

Another goal of the project is to train a new, diverse STEM workforce with the data science skills that are essential for the global competitiveness of the U.S. economy. This will be accomplished through the creation of new undergraduate and graduate research programs focused on the foundations of data science, including a series of collaborative research events. Women and members of underrepresented minority populations will also be served through an associated NSF-supported Research Experience for Undergraduates program in the foundations of data science.

Joining the Johns Hopkins and Duke collaboration are researchers from the University of Pennsylvania; the University of California, Berkeley; Stanford University; and the Technical University of Berlin.