‘Embrace the Ditch,’ and Other Lessons Learned in Duke CEE’s Overture Engineering

Civil and environmental engineering students learn to design buildings within less-than-optimal parameters in a collaborative capstone course

On a Star Wars-themed field of play, student teams deployed small robots they had constructed.

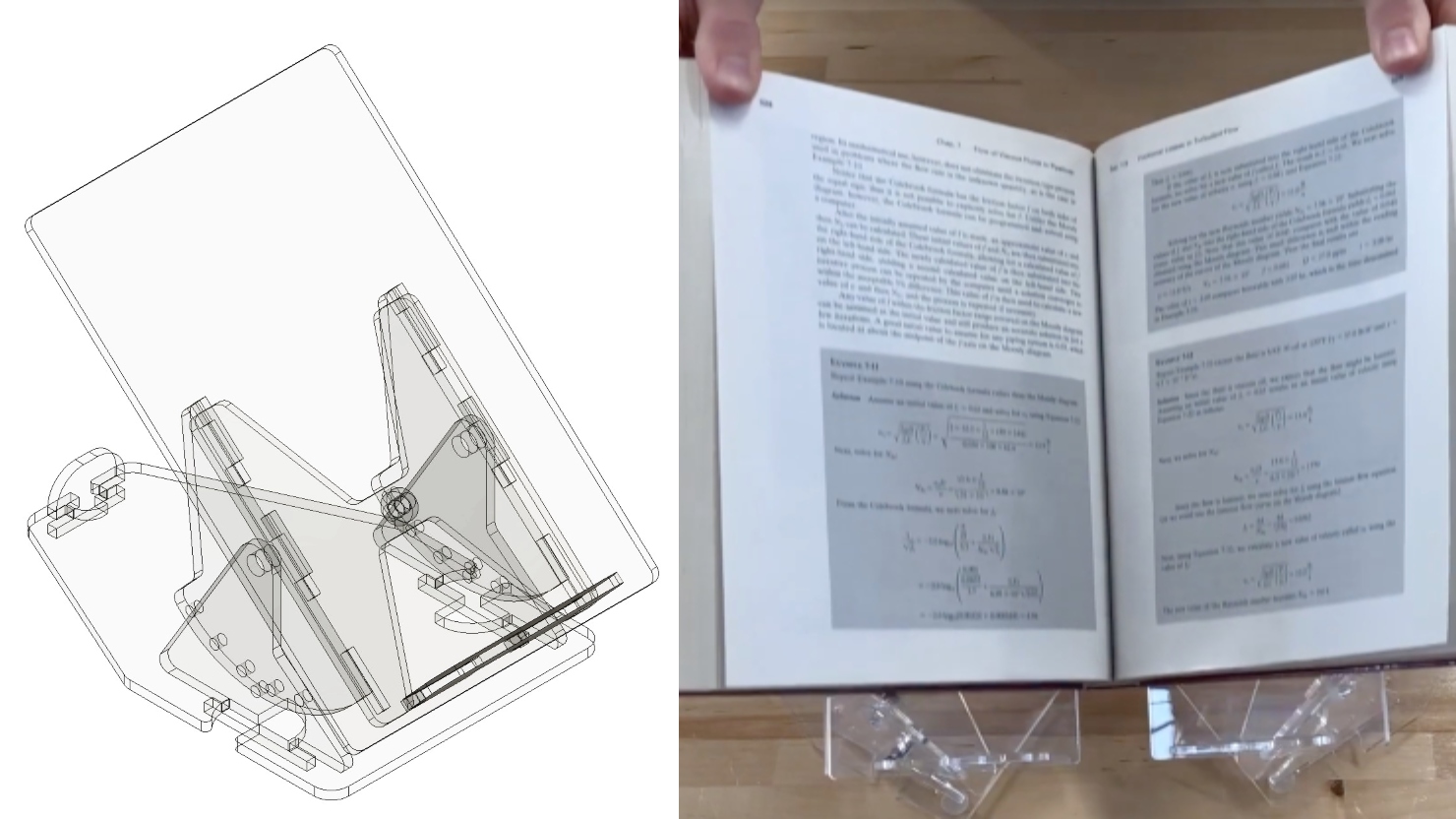

Two projects from the First-Year Design course are patent-pending. Student surveys suggest the course also fosters teamwork, leadership, and communication skills.
